When Google was founded, most of the internet was just text. Today, ~80% of the internet’s IP traffic is video. TikTok is expected to reach 1.8B monthly active users by the end of this year, and everyone uses Zoom. Smart cameras are being placed everywhere from our homes to farms, factories, warehouses, and construction sites, and we’ve been ushered into a new age of creative tooling with products like Figma.

As video has become more integral to products, developers’ ability to quickly deliver great AI experiences built on it has lagged behind. Most companies we talk to today face massive bottlenecks in three areas: running ML workflows at video scale, improving ML models over time to fit their use case, and integrating those outputs into end-user applications.

That’s why we built Sieve: a cloud-native platform that makes it easy to process, understand, and search video.

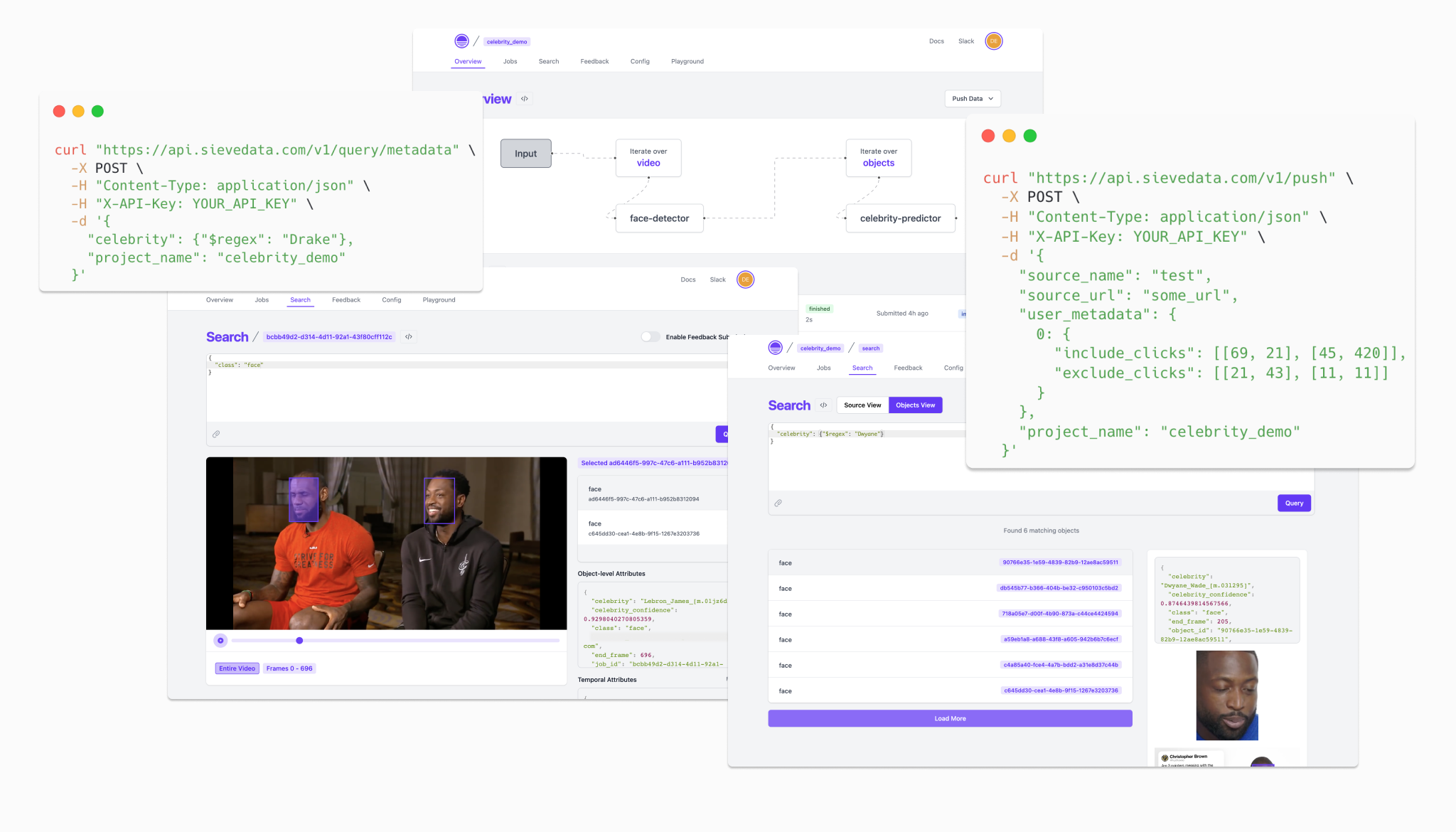

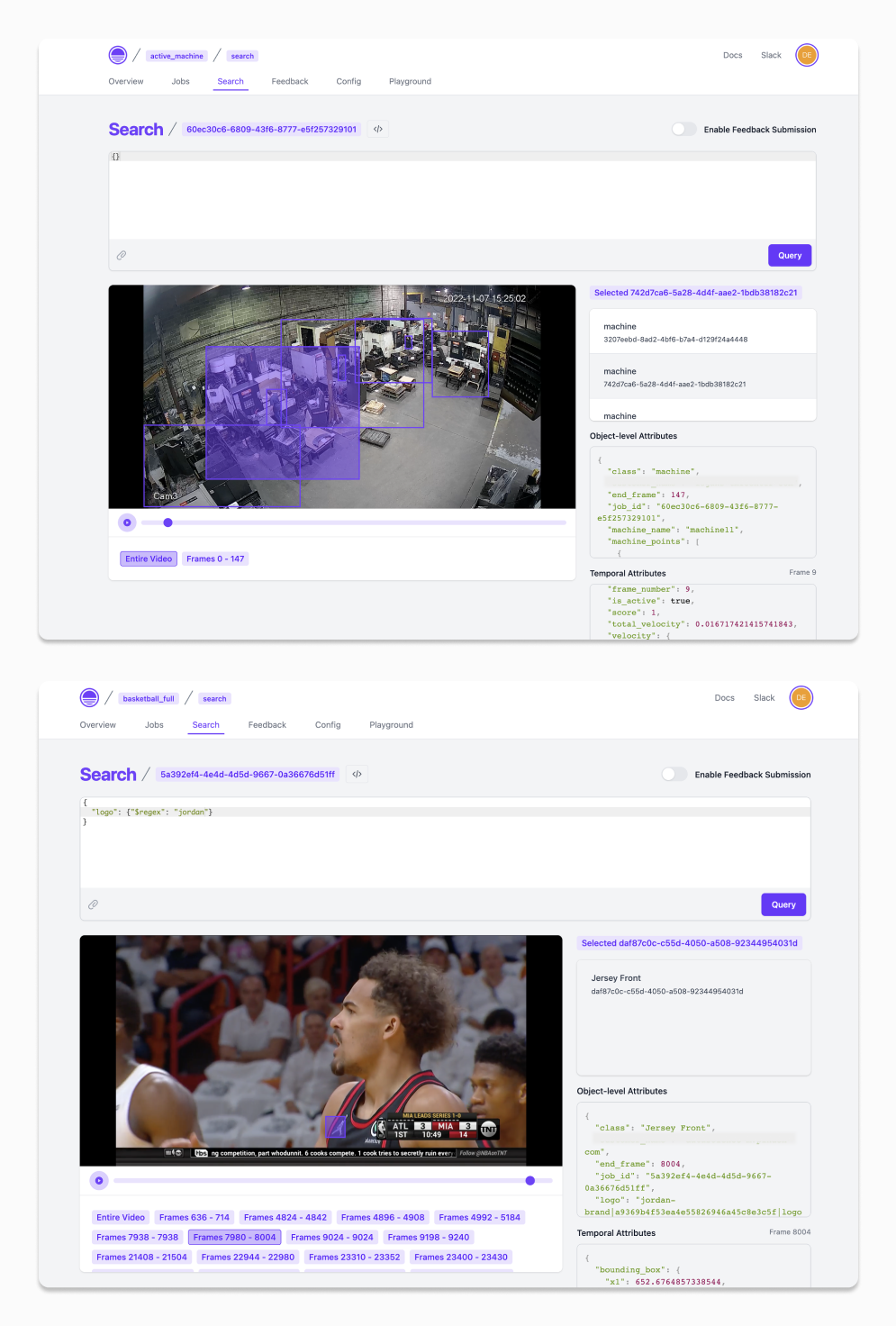

Some people use Sieve as a structured database for video. They define a workflow, run queries against the results, integrate those results into an app, and submit feedback to improve models over time.

Others take querying to a whole different level. Because Sieve exposes information while a video is still being processed, users can poll Sieve’s query API to build highly interactive features without setting up a separate backend.

We built Sieve while working closely with many software startups that were themselves trying to build smart features and experiences with video. Until now, the product has been available only to them, but today we’re opening up our beta to anyone. Just sign up at the top right of this page.

We’re also announcing that we've raised a ~$4M seed round led by Matrix Partners with participation from Y Combinator, Swift Ventures, AI Grant, and a host of angels (Lucy Guo, Eric Jang, Alfredo Andere, Melisa Tokmak, Ryan Chan, Everett Berry, Phillip Kuznetsov, JP Ren). This financing will allow us to stay laser-focused on building the best products for everyone.

Please join our community, try the product, and read the docs. We know it's not perfect yet, but we're excited to learn from your feedback and build a better product together.

Also, if you’re interested in the work we do, check out our jobs page or reach out directly.